Beyond the Chatbot: Escaping the "Groundhog Day" Loop with Agentic Memory

How to move from stateless LLMs to stateful Agents using MongoDB as the brain's memory layer.

The Problem: Living in "Groundhog Day"

Interacting with most modern AI agents feels like living in the movie Groundhog Day.

Every time you open a new chat window, the alarm clock goes off, Sonny & Cher start singing, and the world resets. You are Phil Connors (the user), fully aware of your history, your project requirements, and your past decisions. But the AI is like the townspeople of Punxsutawney. It greets you with the exact same blank stare it had yesterday.

"Bing! Hello! I am an AI assistant. How can I help you today?"

You sigh, and for the hundredth time, you paste in your project context, explain your coding standards, and remind it that you are using Python, not Java.

The Shift to Agentic AI

We’ve all mastered the art of chatting with the "Stateless Chatbot." You know the type: cheerful, polite, and completely incapable of remembering your name five minutes later. It’s like the friendly B&B owner who introduces himself to you every single morning at breakfast. Charming? Sure. Efficient? Not exactly.

Now we’re entering the era of Agentic AI: systems that don't just talk, but can reason, plan, and act over time.

But here’s the catch: to move from a forgetful townsperson to an expert who masters the loop, your agent needs more than just a high IQ. It needs a memory that survives the browser tab closing. After all, intelligence without memory isn’t really intelligence—it’s just very convincing improvisation.

The Architecture of Memory

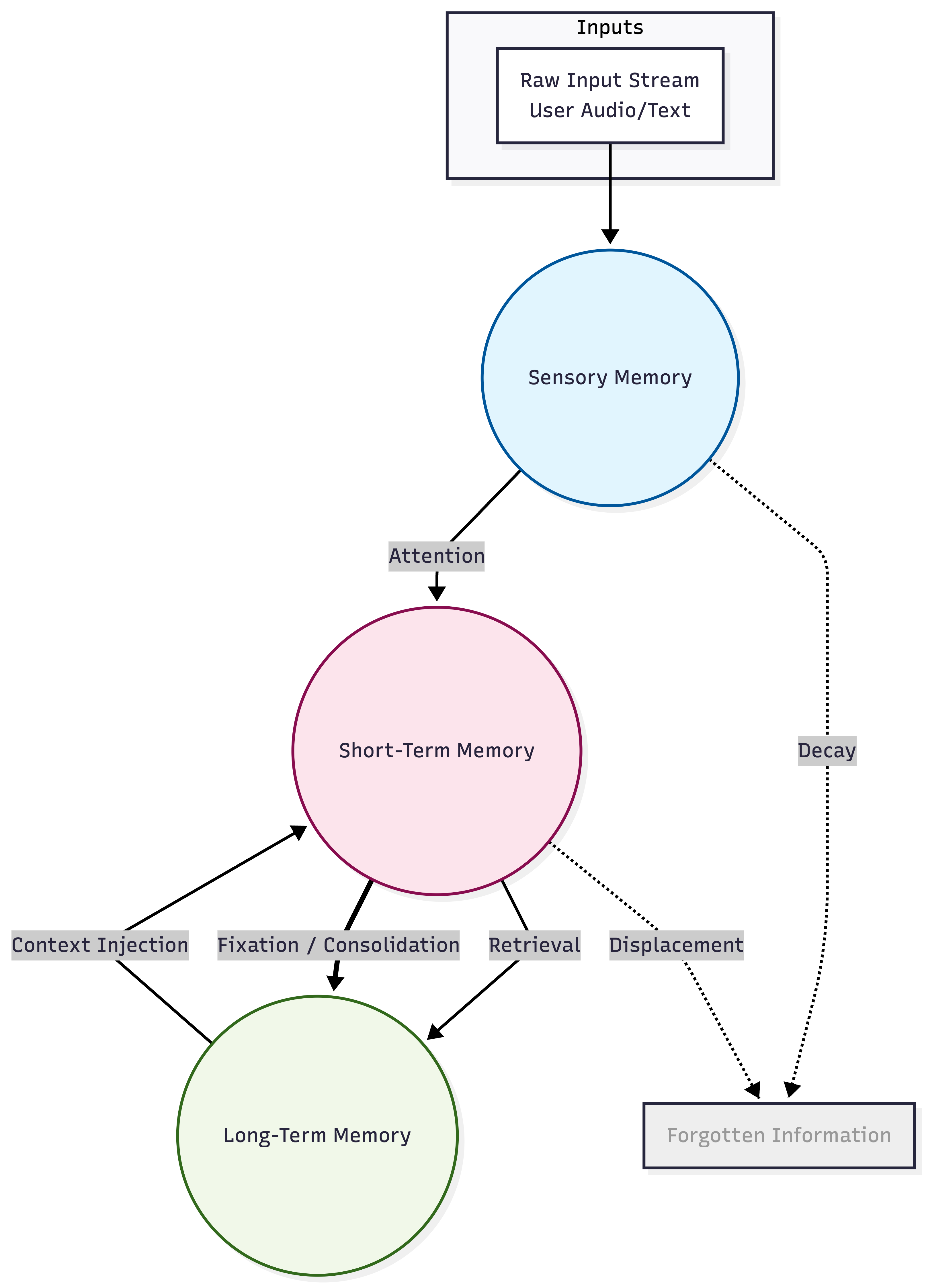

To build a truly intelligent agent, we have to replicate the human memory process. In psychology, memory isn't a single bucket; it's a flow.

When we translate this diagram into an AI architecture, it looks like this:

- Sensory Memory (The Context Window): This is the raw input stream—the user’s prompt, the GitHub webhook, or the error log. It is fleeting and exists only while the LLM is processing the current request.

- Short-Term Memory (Session History): This is the back-and-forth of this specific conversation. It resides in a cache. It’s detailed but temporary.

- Long-Term Memory (The Database): This is where MongoDB comes in. It stores Episodic Memory (past events) and Semantic Memory (facts/knowledge) that persist across sessions.

The magic happens in the arrow labeled "Consolidation." Just as humans consolidate memories while they sleep—discarding the noise and keeping the signal—AI Agents need a process to move critical context from the "Session" to the "Database."
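To make the three tiers concrete, here is a minimal in-memory sketch. The class name `AgentMemory`, its fields, and the `summarize` callback are all illustrative assumptions rather than part of any library, and a plain Python list stands in for the MongoDB collection.

```python
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    """Toy model of the three memory tiers; every name here is illustrative."""

    context_window: str = ""                                   # sensory: current request only
    session_history: list[str] = field(default_factory=list)   # short-term: this conversation
    long_term: list[dict] = field(default_factory=list)        # stand-in for a MongoDB collection

    def perceive(self, message: str) -> None:
        # New input replaces the context window and is appended to the session.
        self.context_window = message
        self.session_history.append(message)

    def consolidate(self, summarize) -> None:
        # Compress the session into one long-term record, then clear the short-term tiers.
        if not self.session_history:
            return
        self.long_term.append({"summary": summarize(self.session_history)})
        self.session_history.clear()
        self.context_window = ""


memory = AgentMemory()
memory.perceive("I use Python, not Java")
memory.perceive("Follow PEP 8")
# A real agent would call an LLM here; joining the messages is a placeholder.
memory.consolidate(lambda messages: " | ".join(messages))
print(memory.long_term)
```

The point of the sketch is the direction of flow: input lands in the short-term tiers first, and only a deliberate consolidation step writes to long-term storage.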

Use Case 1: The Consumer Agent (The "Personal Shopper")

Let’s look at an accessible example first. Imagine a shopping assistant for a user named Sarah. A stateless chatbot sees "Sarah." A stateful Agent sees a "Single View" of Sarah that combines her profile, her past behavior, and her inferred preferences.

Instead of stitching together several SQL tables (one for users, one for logs, one for vector embeddings), a MongoDB-backed agent retrieves a single Context Object like this:

    {
      "_id": "user_12345",
      "name": "Sarah Chen",
      "subscription_tier": "premium",

      // STRUCTURED MEMORY (Facts)
      "facts": {
        "dietary_restrictions": ["gluten-free"],
        "last_active_project": "Kitchen Remodel"
      },

      // EPISODIC MEMORY (Vectorized Interactions)
      "memory_fragments": [
        {
          "date": "2023-10-27",
          "summary": "User had trouble with thermostat installation. Resolved by resetting C-wire.",
          "embedding": [0.012, -0.054, ...], // Allows vector search
          "tags": ["support", "hardware"]
        }
      ]
    }

The "Aha!" Moment

When Sarah asks, "Will these motorized blinds work with my other devices?", the Agent doesn't need to ask "What devices?"

It runs a vector search on her memory_fragments, finds the thermostat installation from October, and replies: "Yes, these blinds are compatible with the thermostat you installed last month."
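The retrieval step can be sketched in plain Python as a brute-force cosine-similarity ranking over the fragments. This is only an illustration of the idea: the function names `cosine_similarity` and `recall`, the three-dimensional toy embeddings, and the second fragment are invented here, and a production system would more likely delegate the ranking to a database-side vector index such as MongoDB Atlas Vector Search.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recall(memory_fragments, query_embedding, top_k=1):
    """Return the top_k fragments whose embeddings are most similar to the query."""
    ranked = sorted(
        memory_fragments,
        key=lambda frag: cosine_similarity(frag["embedding"], query_embedding),
        reverse=True,
    )
    return ranked[:top_k]


# Toy embeddings, invented for illustration; real ones come from an embedding model.
fragments = [
    {
        "summary": "User had trouble with thermostat installation. Resolved by resetting C-wire.",
        "embedding": [0.9, 0.1, 0.0],
        "tags": ["support", "hardware"],
    },
    {
        "summary": "User asked about gluten-free recipes.",  # hypothetical second memory
        "embedding": [0.0, 0.2, 0.9],
        "tags": ["food"],
    },
]

# Pretend this is the embedding of "Will these motorized blinds work with my other devices?"
query = [0.8, 0.3, 0.1]
best = recall(fragments, query)[0]
print(best["summary"])
```

The agent would then place the recalled summary into the prompt alongside Sarah's question, which is what lets it answer without asking "What devices?"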

Use Case 2: The Enterprise Agent (The SDLC Developer)

Now, let’s level up. How does this apply to a complex Software Development Lifecycle (SDLC)?

Enterprise teams are building "SDLC agents" to automate coding tasks. For example, they might use a suite of tools: GitLab for code, Figma for design, and Maestro for orchestration.

In this environment, "forgetting" is expensive. If an agent forgets a design constraint or an API change, it generates broken code. The Agent needs a Project Brain that links all these tools together.

This is where the MongoDB MCP (Model Context Protocol) Server shines. It acts as the "Memory Port" for the agent.

The "Feature Context" Object

Just like Sarah had a user profile, a software feature has a "Feature Context." The Agent retrieves this before writing a single line of code.

    {
      "_id": "feat_8823_dark_mode",
      "project": "mobile-app-v2",
      "status": "in_development",

      // STRUCTURED MEMORY (The Toolchain Links)
      "resources": {
        "gitlab_issue_id": "4022",
        "gitlab_branch": "feat/dark-mode-ui",
        "figma_file_key": "XyZ123... (Frame: Settings_UI)"
      },

      // SEMANTIC MEMORY (The "Why" & "How")
      "architectural_constraints": [
        {
          "source": "ADR_004_Theming",
          "text": "All color tokens must use the 'DesignSystem.Colors' enum. Do not hardcode hex values.",
          "embedding": [0.041, -0.882, ...]
        }
      ],

      // EPISODIC MEMORY (The Agent's Scratchpad)
      "agent_log": {
        "last_action": "Checked Figma variables",
        "blocker": "Waiting for Design Lead to clarify 'system_default' icon."
      }
    }
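One plausible way to use this document is to flatten it into a text preamble that the agent reads before generating code. The sketch below assumes the field names from the sample above; the function name `build_preamble` and the output layout are hypothetical, and the embedding field is omitted because it is not needed for prompting.

```python
def build_preamble(feature: dict) -> str:
    """Flatten a Feature Context document into prompt text for the model."""
    resources = feature.get("resources", {})
    lines = [
        f"Feature: {feature['_id']} (project {feature['project']}, status {feature['status']})",
        f"Branch: {resources.get('gitlab_branch', 'unknown')}",
        "Constraints:",
    ]
    for constraint in feature.get("architectural_constraints", []):
        lines.append(f"- [{constraint['source']}] {constraint['text']}")
    blocker = feature.get("agent_log", {}).get("blocker")
    if blocker:
        # Surface unresolved blockers so the agent does not repeat stalled work.
        lines.append(f"Open blocker: {blocker}")
    return "\n".join(lines)


feature_context = {
    "_id": "feat_8823_dark_mode",
    "project": "mobile-app-v2",
    "status": "in_development",
    "resources": {"gitlab_issue_id": "4022", "gitlab_branch": "feat/dark-mode-ui"},
    "architectural_constraints": [
        {
            "source": "ADR_004_Theming",
            "text": "All color tokens must use the 'DesignSystem.Colors' enum. "
                    "Do not hardcode hex values.",
        }
    ],
    "agent_log": {
        "last_action": "Checked Figma variables",
        "blocker": "Waiting for Design Lead to clarify 'system_default' icon.",
    },
}

print(build_preamble(feature_context))
```

Keeping the constraint source (here the ADR name) next to its text gives the model something to cite when it explains why it avoided a hardcoded value.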

The Workflow in Action

- Trigger: A developer types in Teams: "Update the payment modal."

- Memory Retrieval (MongoDB MCP): The Agent queries MongoDB and retrieves the Feature Context for the payment modal, a document shaped like the one above. It "remembers" that payment logic requires strict validation (from a past Architectural Decision Record).

- Visual Context (Figma MCP): It pulls the latest specs from Figma.

- Action (GitLab MCP): It writes code that respects the architectural constraints found in memory.

- Consolidation: It updates the MongoDB document with the result, so the next agent knows exactly what changed.
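The five steps above can be sketched as a small orchestration function. Nothing here calls a real MCP server: the four callables are hypothetical stand-ins for the MongoDB, Figma, and GitLab integrations, and a plain dictionary plays the role of the database so the flow can be run locally.

```python
def run_task(request, fetch_context, fetch_design, write_code, save_result):
    """Run one agent task: retrieve memory, gather design context, act, consolidate."""
    context = fetch_context(request)               # Memory Retrieval
    design = fetch_design(context)                 # Visual Context
    change = write_code(request, context, design)  # Action
    save_result(context, change)                   # Consolidation
    return change


# In-memory stand-in for the long-term store (assumption: one document per feature).
store = {
    "payment_modal": {
        "constraints": ["Payment logic requires strict validation."],
        "agent_log": {},
    }
}


def fetch_context(request):
    # A real agent would look the feature up by ID or by semantic search.
    return store["payment_modal"]


def fetch_design(context):
    # Placeholder for pulling the latest design specs.
    return {"frame": "placeholder"}


def write_code(request, context, design):
    # Placeholder for code generation; it records which constraints were honored.
    return {"request": request, "constraints_applied": list(context["constraints"])}


def save_result(context, change):
    # Write the outcome back so the next run starts with this knowledge.
    context["agent_log"]["last_action"] = f"Completed: {change['request']}"


result = run_task("Update the payment modal.", fetch_context, fetch_design, write_code, save_result)
print(result)
print(store["payment_modal"]["agent_log"])
```

Passing the integrations in as arguments keeps the loop itself independent of any one tool, which makes it straightforward to swap a stub for a real client later.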

How to Build It: The Consolidation Workflow

So, how do we move data from the "Chat" to the "Database" without creating a mess? We engineer the Consolidation step from the diagram.

Step 1: The Session Buffer (Short-Term)

Store raw conversation logs in a lightweight cache or temporary collection. This is your "noisy" stream.

Step 2: The "Dreaming" Phase (Extraction)

Periodically (e.g., at the end of a session or sprint task), trigger a background LLM process.

- Input: The raw chat transcript.

- Prompt: "Extract key facts (bugs, decisions, preferences) and summarize the outcome."

Step 3: The Update (Long-Term)

Write the result to your MongoDB "Golden Record."

- Structured Data: Update fields like status or preferences.

- Vector Data: Embed the summary and push it to the memory_fragments array for future semantic search.
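Step 3 can be sketched as a function that turns an extraction result into a MongoDB update document using the standard `$set` and `$push` operators. The shape of the `extracted` dictionary (keys `summary`, `facts`, `tags`) and the function name are assumptions for illustration; the LLM extraction and the embedding call are left out, so the summary text and the short embedding below are placeholders.

```python
from datetime import date


def build_consolidation_update(extracted: dict, embedding: list, today: date) -> dict:
    """Build a MongoDB-style update document from one extraction result."""
    update = {
        "$push": {
            "memory_fragments": {
                "date": today.isoformat(),
                "summary": extracted["summary"],
                "embedding": embedding,
                "tags": extracted.get("tags", []),
            }
        }
    }
    if extracted.get("facts"):
        # Dot notation updates individual fields without replacing the whole "facts" object.
        update["$set"] = {f"facts.{key}": value for key, value in extracted["facts"].items()}
    return update


# Hypothetical output of the "Dreaming" phase for one session.
extracted = {
    "summary": "User had trouble with thermostat installation. Resolved by resetting C-wire.",
    "facts": {"last_active_project": "Kitchen Remodel"},
    "tags": ["support", "hardware"],
}

update = build_consolidation_update(extracted, [0.012, -0.054], date(2023, 10, 27))
print(update)
```

With the PyMongo driver, a document like this would typically be applied with something along the lines of `collection.update_one({"_id": "user_12345"}, update)`, so the structured facts and the new memory fragment land in a single write.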

Conclusion: Breaking the Loop

We are moving from Chatbots (Input → Output) to Cognitive Architectures (Perception → Memory Retrieval → Reasoning → Action).

Your model (GPT-4, Claude, Llama) is the CPU. It processes information. But MongoDB is the RAM and Hard Drive. It gives the CPU the context it needs to actually be useful.

Whether you are building a personal shopper for "Sarah" or an autonomous developer for your DevOps pipeline, the success of your agent depends on the quality of its memory. It is the only way to escape the Groundhog Day loop and build agents that actually learn.